Robert Baraldi

University of Washington

As a North Carolina State University mathematics student, Robert Baraldi applied what he learned to biology and genetics, largely because his mentor, the late Harvey Thomas Banks, was interested in those subjects.

But as Baraldi continued working on things like genomics, he found himself “increasingly drawn to the actual math instead of the applications. I really got into writing algorithms.”

So when he began his applied mathematics Ph.D. at the University of Washington, Baraldi joined Aleksandr Aravkin’s group to work on geophysics, a field with interesting problems requiring novel algorithms. “I feel like this geology-seismology stuff scratches that (algorithm-writing) itch,” Baraldi says.

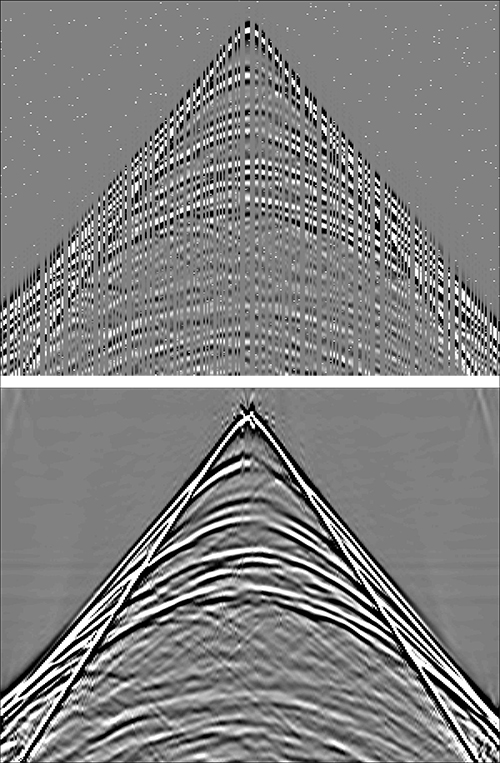

He focuses specifically on inverse problems, which start with observations, such as images, and seek to identify conditions that produced them. It’s difficult because the observed data can be sparse or unreliable, and meaningless noise can obscure key clues.

Despite the geophysics application, the methods Baraldi develops are broadly applicable. Inverse problems are everywhere. “People need to figure out what the governing parameters are for the data they've observed. In the age of big data, everyone has more than their fair share” of information to explore.

Getting to those parameters involves optimization, which seeks to find a minimum value from a host of possibilities. It’s like a hiker who’s seeking the lowest valley in a mountain range. “You’re at the top of the hill and you want to get to the bottom” in the fastest way, Baraldi says.

Such problems, however, can lack vital information to help find the answer and avoid landing in a low spot that isn’t the real minimum. To overcome these irregular and difficult surfaces, Baraldi uses techniques that capitalize on the available data and make assumptions about them while relaxing solution constraints.
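The hiker picture can be made concrete with a toy calculation. The sketch below is only an illustration, not Baraldi's method: the one-dimensional function `f`, the step size and the starting points are all assumptions chosen for the example. It runs plain gradient descent from two starting points and shows that one run settles in a shallow valley while the other finds the deeper one.

```python
# Toy illustration (hypothetical function, not from Baraldi's work):
# f has a shallow valley near x = 1.13 and a deeper one near x = -1.30.
def f(x):
    return x**4 - 3 * x**2 + x


def grad_f(x):
    # Derivative of f, used as the downhill direction.
    return 4 * x**3 - 6 * x + 1


def descend(x0, step=0.01, iters=5000):
    """Plain gradient descent: repeatedly take a small step downhill."""
    x = x0
    for _ in range(iters):
        x -= step * grad_f(x)
    return x


if __name__ == "__main__":
    for start in (2.0, -2.0):
        end = descend(start)
        print(f"start {start:+.1f} -> x = {end:+.3f}, f(x) = {f(end):+.3f}")
```

Starting on the right-hand slope, the descent stops in the shallower valley even though a lower point exists on the other side of the ridge, which is the kind of false minimum the text describes.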

In one project, Aravkin’s group works with UW geophysicists who use earthquake sensor data and other information to map molten rock flow beneath Mount St. Helens. Baraldi helped develop an algorithm that combines readings from multiple instruments with physics knowledge to boost accuracy from a particular sensor.

Baraldi also worked on an inverse problem for his 2018 Lawrence Berkeley National Laboratory practicum with DOE CSGF alumnus Matthew Zahr, now at the University of Notre Dame. The project related to magnetic resonance imaging (MRI) data that captured random fluid flow. As in the Mount St. Helens project, the goal was to resolve image features in high fidelity.

The team worked with velocity data to find parameters for the flow entering the MRI region. Results were cast as a distribution of possible solutions that the parameters could produce. Algorithms sampled the distribution to find the optimal result – a lengthy process. “If you have to run a model every time you sample and your model can take a day on a supercomputer, it’s pretty difficult,” Baraldi says.

Baraldi and his colleagues used implicit sampling, which focuses on a distribution segment where an optimal solution is most probable. The researchers also implemented the method on a reduced-order model – a simpler, faster version of the governing equations. Finally, Baraldi planned to integrate uncertainty quantification calculations to tell researchers how much they should trust the results.

The team had begun testing its approach when Baraldi’s practicum ended but continues to collaborate.

Baraldi’s second practicum, begun in person at Argonne National Laboratory in February 2020 but completed virtually, addressed inverse problems in a machine-learning context. With Sven Leyffer and DOE CSGF alumnus Stefan Wild, he developed an alternative way to tackle irregular parameter surfaces like those in the seismic calculations – ones with false minimums.

Instead of going directly down the slope to seek the deepest valley, the technique the team tested, the alternating direction method of multipliers (ADMM), splits the calculations into simpler parts and solves them iteratively.
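A minimal sketch of the splitting idea, under stated assumptions: the problem below (shrinking a noisy vector `a` toward zero with an L1 penalty of weight `lam`) is a standard convex textbook case on which ADMM is known to converge, not one of the difficult problems from the practicum, and it does not include the filter methods the team explored. The function names and the values of `rho` and `iters` are illustrative choices.

```python
def soft_threshold(v, t):
    """Shrink v toward zero by t; the closed-form solution of the L1 step."""
    return max(v - t, 0.0) - max(-v - t, 0.0)


def admm_l1_denoise(a, lam, rho=1.0, iters=200):
    """Minimize 0.5*||x - a||^2 + lam*||z||_1 subject to x = z (scaled ADMM).

    The objective is split into a smooth part (in x) and a nonsmooth part
    (in z); each is easy to minimize alone, and u ties the two copies together.
    """
    n = len(a)
    x = [0.0] * n
    z = [0.0] * n
    u = [0.0] * n  # scaled dual variable
    for _ in range(iters):
        # Step 1: minimize the smooth quadratic part with z and u held fixed.
        x = [(ai + rho * (zi - ui)) / (1.0 + rho) for ai, zi, ui in zip(a, z, u)]
        # Step 2: minimize the L1 part with x and u held fixed.
        z = [soft_threshold(xi + ui, lam / rho) for xi, ui in zip(x, u)]
        # Step 3: update the dual variable with the remaining mismatch x - z.
        u = [ui + xi - zi for ui, xi, zi in zip(u, x, z)]
    return z


if __name__ == "__main__":
    print(admm_l1_denoise([3.0, -0.5, 1.2], lam=1.0))
```

For this simple problem the answer is also available in closed form (soft-thresholding `a` by `lam`), which makes it a convenient check that the iterations settle on the right values.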

But ADMM, which is widely used in machine learning, doesn’t converge on a correct answer for some problems – “and of course these problems are the ones people care about,” Baraldi says. He applied filter methods, which compare the solution to problem constraints and seek the minimum based on performance, to force ADMM to converge.

“We’re hoping to at least guide convergence” with the technique or “shed light onto why it doesn’t converge” for these problems. The method was working on simple tasks when his practicum ended. That work continues.

Even with the added projects, Baraldi expects to graduate by mid-2021 and hopes to land a postdoctoral research fellowship.

Image caption: Interpolation and denoising results for a common source extracted from a seismic line simulated using a two-dimensional model. The top figure represents missing data with non-Gaussian, noisy outliers; the bottom figure represents image reconstruction with a nonsmooth cost function and constraint. Credit: R. Baraldi, R. Kumar and A. Aravkin, "Basis Pursuit Denoise With Nonsmooth Constraints," in IEEE Transactions on Signal Processing, vol. 67, no. 22, pp. 5811-5823, Nov. 15, 2019, doi: 10.1109/TSP.2019.2946029.