New on DEIXIS online: Analysis Restaurant

High-performance computing (HPC) systems are like a burgeoning restaurant that can’t widen its service window fast enough to keep pace with its expanding dining room. Computer clusters crunch numbers while ambitious simulations and experiments generate ever bigger and more complex data-analysis orders.

To save HPC resources for the computational heavy lifting of running simulations and managing major experiments, the resulting data are analyzed offline. They’re read from storage and transmitted through a narrow-bandwidth window to a cluster outside the high-performance machine. After the cluster completes the analysis, the final data must then be written back into storage through the same small portal.

Soon, data-analysis orders pile up, waiting their turn for processing. HPC machines in this position can never reach their full potential, no matter how fast processing power increases. Much of their time is spent just moving data from one place to another, and the problem will only get worse as HPC rises toward exascale – a million trillion calculations per second.

Part of the solution may be akin to waiters preparing meals at the tables, avoiding the service window bottleneck altogether.

That’s the idea behind AnalyzeThis, a novel data storage and analysis system developed by Sudharshan Vazhkudai and colleagues. Vazhkudai leads the Technology Integration Group at the National Center for Computational Sciences at Oak Ridge National Laboratory. With colleagues at Virginia Tech and Lawrence Berkeley National Laboratory, the team is souping up the computational power of solid-state devices (SSDs) – an approach they call active flash.

“You have a bandwidth bottleneck exacerbated by the need to do data wrangling,” Vazhkudai says. “Why not do the analysis on the storage component, where the data already resides?”

The group will describe its approach Nov. 17 during a session on scalable storage systems at the SC15 supercomputing conference in Austin, Texas.

AnalyzeThis grew out of the group’s work exploring ways to use SSDs in input/output (I/O) and in memory extension. Along the way, a third line of inquiry emerged: How might SSDs be used for in situ data analysis – that is, analysis performed as the simulation runs?

Each SSD is already equipped with a controller: a multicore processor that manages storage and retrieval of data. The research team realized that, when the controllers are not busy managing I/O, they might also execute data analysis kernels.
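The idea of a controller picking up analysis work only when it has no I/O to manage can be illustrated with a toy scheduler. This is a minimal sketch, not the AnalyzeThis implementation: the `FlashController` class, its method names and the task labels are all hypothetical, and a real controller would run firmware rather than Python.

```python
from collections import deque


class FlashController:
    """Toy model of an SSD controller (hypothetical, for illustration only)."""

    def __init__(self):
        self.io_requests = deque()     # storage and retrieval work, always served first
        self.analysis_tasks = deque()  # analysis kernels, run only when no I/O is pending
        self.log = []                  # record of what ran, in order

    def submit_io(self, name):
        self.io_requests.append(name)

    def submit_analysis(self, name):
        self.analysis_tasks.append(name)

    def step(self):
        """Run one unit of work, preferring I/O; return False when nothing is left."""
        if self.io_requests:
            self.log.append(("io", self.io_requests.popleft()))
        elif self.analysis_tasks:
            self.log.append(("analysis", self.analysis_tasks.popleft()))
        else:
            return False
        return True


def demo_schedule():
    ctrl = FlashController()
    ctrl.submit_analysis("histogram")  # queued first, but must wait for I/O
    ctrl.submit_io("write block 0")
    ctrl.submit_io("read block 7")
    while ctrl.step():
        pass
    return ctrl.log
```

In this sketch the analysis task is submitted first but runs last, because the controller drains its I/O queue before touching analysis work.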

In the AnalyzeThis system, active flash devices within a high-performance machine crunch numbers while the machine itself is busy computing and not using the flash devices for I/O. AnalyzeThis directs data to leapfrog from one active flash system to another, allowing each device to automatically execute predetermined analytics kernels on any data it receives. A file-system layer gives users the ability to dictate which analytics are done and to track the progress of data through the system. In the end, the devices complete much of the analysis without ever transferring the data from the HPC system. In fact, the data move very little, even among the active flash devices themselves.
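One way to picture this flow is a chain of devices, each bound to a predetermined kernel, with every data object carrying a record of where it has been and what was done to it. The sketch below is a loose illustration under that assumption, not the team's code: `DataObject`, `ActiveFlashDevice`, `run_workflow` and the two kernels are hypothetical names, and the real system exposes this through a file-system layer rather than Python objects.

```python
from dataclasses import dataclass, field


@dataclass
class DataObject:
    values: list
    provenance: list = field(default_factory=list)  # (device, kernel) pairs, in order


class ActiveFlashDevice:
    """Hypothetical active flash device bound to one predetermined kernel."""

    def __init__(self, name, kernel):
        self.name = name
        self.kernel = kernel

    def receive(self, obj):
        # Run the kernel on arrival and extend the provenance trail.
        result = self.kernel(obj.values)
        step = (self.name, self.kernel.__name__)
        return DataObject(result, obj.provenance + [step])


def drop_negatives(values):
    return [v for v in values if v >= 0]


def mean(values):
    return [sum(values) / len(values)]


def run_workflow(obj, devices):
    # Data leapfrogs from one device to the next; each applies its own kernel.
    for device in devices:
        obj = device.receive(obj)
    return obj


def demo_workflow():
    devices = [
        ActiveFlashDevice("flash0", drop_negatives),
        ActiveFlashDevice("flash1", mean),
    ]
    return run_workflow(DataObject([4.0, -1.0, 2.0]), devices)
```

Calling `demo_workflow()` yields an object whose `provenance` list names each device and kernel in the order they were applied, a rough stand-in for the progress tracking that the file-system layer provides.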

In Vazhkudai’s words, this approach makes data analyses “first-class citizens in the storage system.” The data and analyses are blended into the storage system so that the location reflects the analytics performed and the analytics indicate the location (provenance). The team calls their system an “analysis workflow-aware storage system.”

Read more at DEIXIS: Computational Science at the National Laboratories, the online companion to the DOE CSGF’s annual print journal.

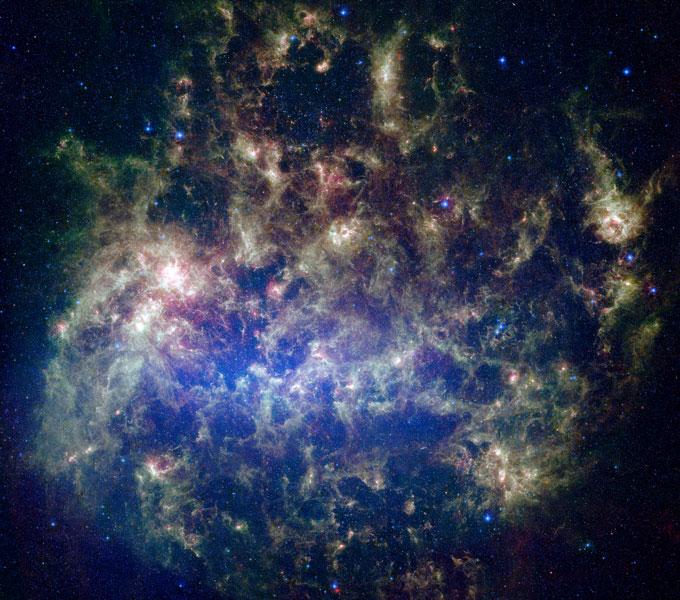

Image caption: The group behind AnalyzeThis tested its system on complex, real-world workflows, including Montage, a program used to produce astronomical images like this one of the Large Magellanic Cloud. This mosaic, compiled from NASA Spitzer Space Telescope data, was made as part of a project called SAGE (Surveying the Agents of a Galaxy’s Evolution). Courtesy of NASA/JPL-Caltech/M. Meixner (Space Telescope Science Institute) and the SAGE legacy team.